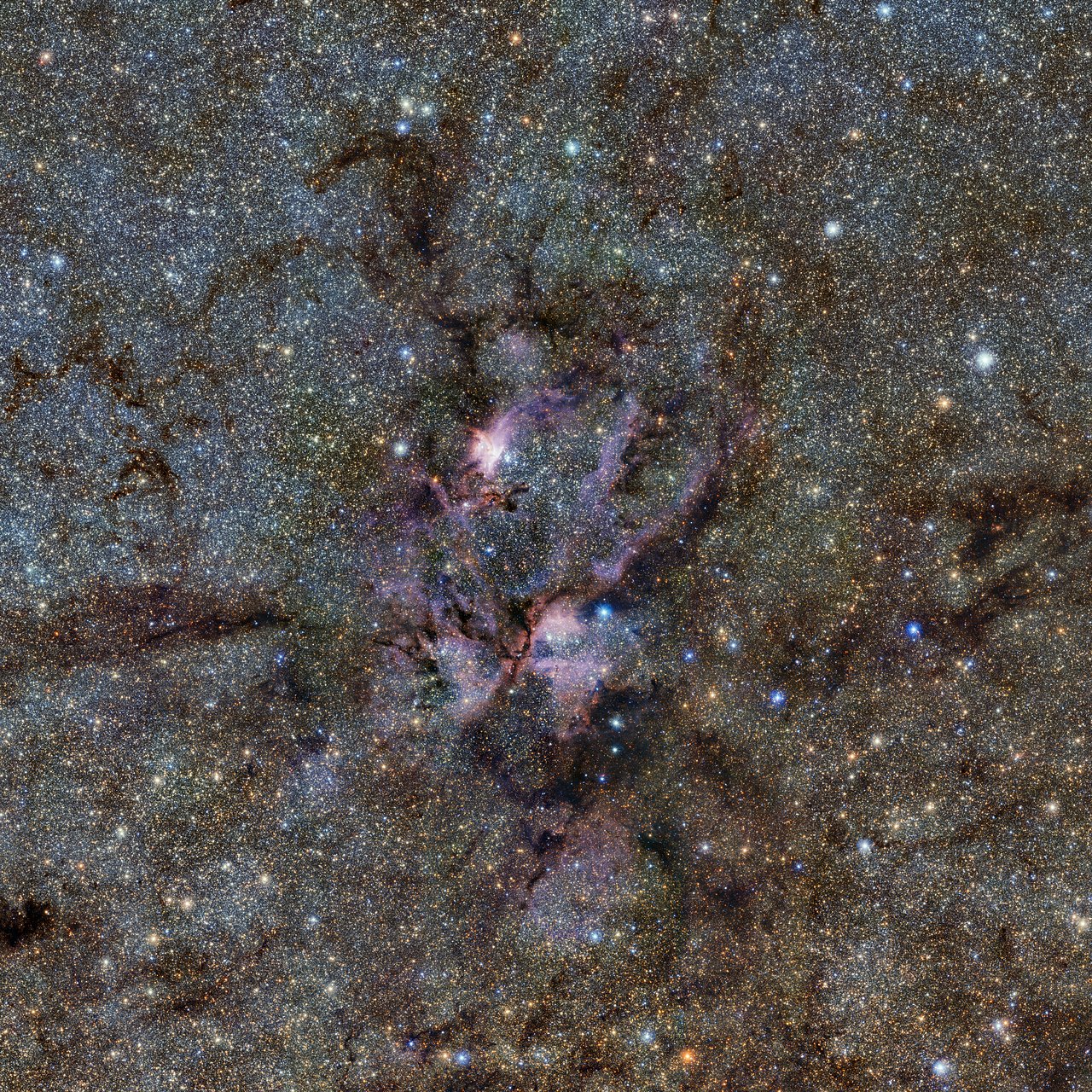

ESO Vista image

I've been shocked by A.I. before, only to see, after some exposure and testing, that all it really is is reasoning ability abstracted and computerized. A.I., like computers in general, only does things when told; when the task is finished, the A.I. just sits there waiting for the next command from an actual human.

As with the weapons debate: yes, weapons kill, but it's people who pull the trigger. The people are the problem, and this will apply to A.I. as well.

Besides the more serious abuse partly addressed above, accidents of A.I. going haywire are not likely until A.I. systems become genuinely conscious, feeling entities. I've yet to see any A.I. researcher come up with a good understanding of these things; they seem nowhere close to solving awareness.

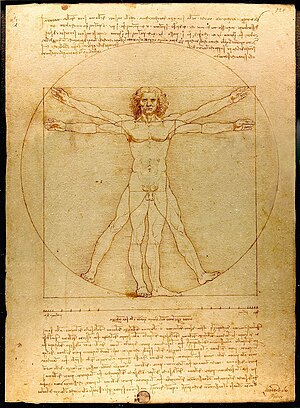

I do believe that A.I. will lead us to solving awareness, but it will come from our failure to make A.I. conscious. For hundreds of years after the translation of Arabic translations of Greek texts, people pursued the idea of perpetual motion machines; the failure to build one eventually led to the science of thermodynamics. I of course think a similar thing will happen here. Gödel's theorems will be key: why do humans, and life in general, overcome Gödel's theorems while computers generally don't? I think I've hinted at the answer before somewhere in this blog: dynamical systems and chaos theory. The bifurcation from one structure to a more complex, organised structure is like the adding of a new axiom because the previous structure's axiomatic description was not strong enough to deal with everything. And of course, chaos theory points to life's amazing ability to be spontaneous and creative in unpredictable ways.
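To make the word "bifurcation" concrete, here is a minimal sketch. It assumes the logistic map, x → r·x·(1 − x), as a stand-in system; that choice is mine for illustration, not something the argument above depends on, and the function names and parameter values are hypothetical. It counts how many distinct values the system settles into as the parameter r is raised.

```python
def logistic_orbit(r, x0=0.5, warmup=1000, keep=64):
    """Return the distinct long-run values of the logistic map for a given r.

    Assumption: the logistic map is used purely as a textbook example of a
    system that bifurcates; warmup/keep/rounding are illustrative choices.
    """
    x = x0
    # Discard the transient so only the settled behaviour remains.
    for _ in range(warmup):
        x = r * x * (1 - x)
    seen = []
    for _ in range(keep):
        x = r * x * (1 - x)
        # Round so that tiny floating-point differences count as one value.
        seen.append(round(x, 6))
    return sorted(set(seen))


def count_attractor_points(r):
    """Number of distinct values the orbit visits once it has settled."""
    return len(logistic_orbit(r))


if __name__ == "__main__":
    for r in (2.8, 3.2, 3.5):
        print(r, count_attractor_points(r))
```

Under these assumptions the count goes from one settled value at r = 2.8, to two at r = 3.2, to four at r = 3.5: each jump is a bifurcation, where the old, simpler structure gives way to a more complex one. Whether that really resembles adding an axiom is the speculative part; the sketch only shows the dynamical side of the analogy.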

I still can't see the physical basis for all the theoretical evidence I've pointed out in this blog for Jacob Bronowski's ideas. Well, O.K., I had posted in the previous incarnation of this blog about Jacob Bronowski's ideas and A.I. I've suggested that in order for any lifeform to get around and eat properly, it has to do some kind of mathematics or science to tell whether what it thinks is food really is food. I've seen a few times now (including the YouTube video of the A.I. robot above) that this is the case: that language is a kind of decoding into units (or, as Jacob Bronowski would call them, "inferred units") from a process of generalisation, idealisation and abstraction.
